Copyright 2019 The TensorFlow Authors.

Licensed under the Apache License, Version 2.0 (the "License");

pix2pix: Image-to-image translation with a conditional GAN

This tutorial demonstrates how to build and train a conditional generative adversarial network (cGAN) called pix2pix that learns a mapping from input images to output images, as described in Image-to-image translation with conditional adversarial networks by Isola et al. (2017). pix2pix is not application specific: it can be applied to a wide range of tasks, including synthesizing photos from label maps, generating colorized photos from black and white images, turning Google Maps photos into aerial images, and even transforming sketches into photos.

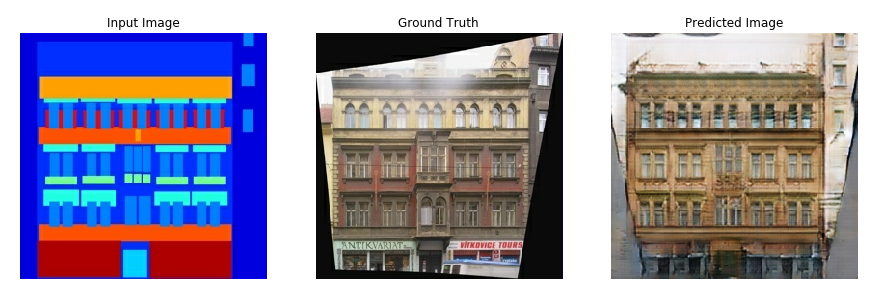

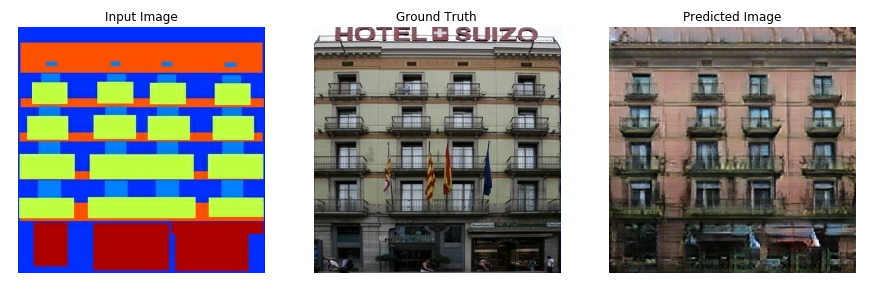

In this example, your network will generate images of building facades using the CMP Facade Database provided by the Center for Machine Perception at the Czech Technical University in Prague. To keep it short, you will use a preprocessed copy of this dataset created by the pix2pix authors.

In the pix2pix cGAN, you condition on input images and generate corresponding output images. cGANs were first proposed in Conditional Generative Adversarial Nets (Mirza and Osindero, 2014).

The architecture of your network will contain:

A generator with a U-Net-based architecture.

A discriminator represented by a convolutional PatchGAN classifier (proposed in the pix2pix paper).

Note that each epoch can take around 15 seconds on a single V100 GPU.

Below are some examples of the output generated by the pix2pix cGAN after training for 200 epochs on the facades dataset (80k steps).

Import TensorFlow and other libraries

Load the dataset

Download the CMP Facade Database data (30 MB). Additional datasets are available in the same format. In Colab you can select other datasets from the drop-down menu. Note that some of the other datasets are significantly larger (edges2handbags is 8 GB in size).

Each original image is of size 256 x 512 containing two 256 x 256 images:

You need to separate real building facade images from the architecture label images—all of which will be of size 256 x 256.

Define a function that loads image files and outputs two image tensors:
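A minimal sketch of such a loader follows. It assumes the files are JPEGs and that the left half of each file holds the photo while the right half holds the label image; neither detail is stated above, so check a sample before relying on it. The names `split_pair` and `load` are illustrative.

```python
import tensorflow as tf


def split_pair(image):
    """Split a side-by-side (H, 2W, C) image into two (H, W, C) float tensors."""
    w = tf.shape(image)[1] // 2
    # Assumption: real photo on the left, architecture label image on the right.
    real_image = tf.cast(image[:, :w, :], tf.float32)
    input_image = tf.cast(image[:, w:, :], tf.float32)
    return input_image, real_image


def load(image_file):
    """Read and decode one JPEG file, then split it into (input, real)."""
    image = tf.io.decode_jpeg(tf.io.read_file(image_file))
    return split_pair(image)
```

Keeping the split in its own function makes it easy to check on a synthetic tensor without touching the file system.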

Plot a sample of the input (architecture label image) and real (building facade photo) images:

As described in the pix2pix paper, you need to apply random jittering and mirroring to preprocess the training set.

Define several functions that:

Resize each 256 x 256 image to a larger height and width, 286 x 286.

Randomly crop it back to 256 x 256.

Randomly flip the image horizontally, i.e. left to right (random mirroring).

Normalize the images to the [-1, 1] range.
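The four steps above can be sketched as follows. The nearest-neighbor resize method and the 0.5 flip probability are assumptions, as are the function names. Both images are stacked before cropping so that they receive the same crop window.

```python
import tensorflow as tf

IMG_HEIGHT = 256
IMG_WIDTH = 256


def resize(input_image, real_image, height, width):
    # Assumption: nearest-neighbor interpolation.
    method = tf.image.ResizeMethod.NEAREST_NEIGHBOR
    input_image = tf.image.resize(input_image, [height, width], method=method)
    real_image = tf.image.resize(real_image, [height, width], method=method)
    return input_image, real_image


def random_crop(input_image, real_image):
    # Stack the pair so one random window is applied to both images.
    stacked = tf.stack([input_image, real_image], axis=0)
    cropped = tf.image.random_crop(stacked, size=[2, IMG_HEIGHT, IMG_WIDTH, 3])
    return cropped[0], cropped[1]


def random_jitter(input_image, real_image):
    input_image, real_image = resize(input_image, real_image, 286, 286)
    input_image, real_image = random_crop(input_image, real_image)
    # Mirror both images together with probability 0.5.
    if tf.random.uniform(()) > 0.5:
        input_image = tf.image.flip_left_right(input_image)
        real_image = tf.image.flip_left_right(real_image)
    return input_image, real_image


def normalize(input_image, real_image):
    # Map pixel values from [0, 255] to [-1, 1].
    return input_image / 127.5 - 1.0, real_image / 127.5 - 1.0
```

Random jitter is meant for the training set only; for the test set you would typically just resize and normalize.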

You can inspect some of the preprocessed output:

Having checked that the loading and preprocessing works, let's define a couple of helper functions that load and preprocess the training and test sets:

Build an input pipeline with tf.data

Build the generator

The generator of your pix2pix cGAN is a modified U-Net. A U-Net consists of an encoder (downsampler) and decoder (upsampler). (You can find out more about it in the Image segmentation tutorial and on the U-Net project website.)

Each block in the encoder is: Convolution -> Batch normalization -> Leaky ReLU

Each block in the decoder is: Transposed convolution -> Batch normalization -> Dropout (applied to the first 3 blocks) -> ReLU

There are skip connections between the encoder and decoder (as in the U-Net).

Define the downsampler (encoder):
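One way to write an encoder block with the Convolution -> Batch normalization -> Leaky ReLU order described above is sketched here. The stride of 2, the weight initializer, the bias-free convolution, and the `apply_batchnorm` switch are assumptions rather than details given in the text.

```python
import tensorflow as tf


def downsample(filters, size, apply_batchnorm=True):
    """Conv (stride 2) -> optional batch norm -> Leaky ReLU."""
    # Assumption: weights drawn from N(0, 0.02).
    initializer = tf.random_normal_initializer(0.0, 0.02)
    block = tf.keras.Sequential()
    block.add(tf.keras.layers.Conv2D(
        filters, size, strides=2, padding="same",
        kernel_initializer=initializer, use_bias=False))
    if apply_batchnorm:
        block.add(tf.keras.layers.BatchNormalization())
    block.add(tf.keras.layers.LeakyReLU())
    return block
```

With stride 2 and "same" padding, each block halves the height and width, so a 256 x 256 input becomes 128 x 128.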

Define the upsampler (decoder):
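A matching decoder block, following the Transposed convolution -> Batch normalization -> Dropout -> ReLU order described above, might look like this. The dropout rate of 0.5, the stride, and the initializer are assumptions; `apply_dropout` would be enabled only for the first 3 decoder blocks.

```python
import tensorflow as tf


def upsample(filters, size, apply_dropout=False):
    """Transposed conv (stride 2) -> batch norm -> optional dropout -> ReLU."""
    # Assumption: weights drawn from N(0, 0.02).
    initializer = tf.random_normal_initializer(0.0, 0.02)
    block = tf.keras.Sequential()
    block.add(tf.keras.layers.Conv2DTranspose(
        filters, size, strides=2, padding="same",
        kernel_initializer=initializer, use_bias=False))
    block.add(tf.keras.layers.BatchNormalization())
    if apply_dropout:
        # Assumption: dropout rate of 0.5.
        block.add(tf.keras.layers.Dropout(0.5))
    block.add(tf.keras.layers.ReLU())
    return block
```

Each block doubles the height and width, mirroring the halving done by the encoder.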

Define the generator with the downsampler and the upsampler:

Visualize the generator model architecture:

Test the generator:

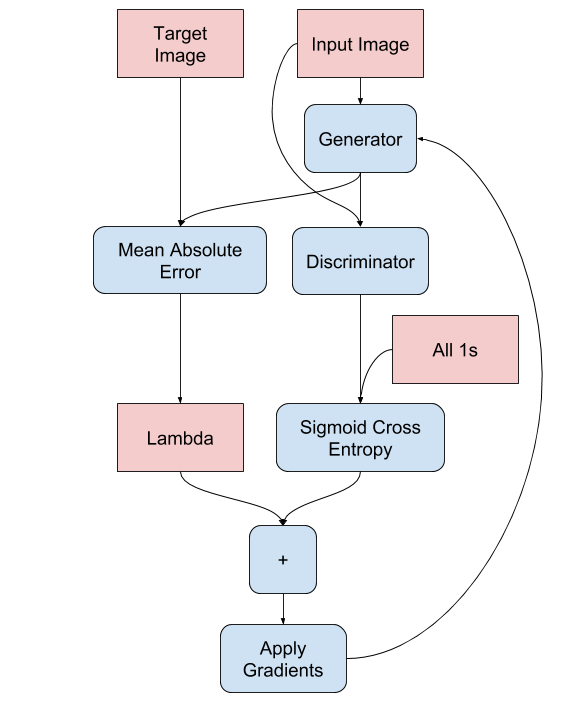

Define the generator loss

GANs learn a loss that adapts to the data, while cGANs learn a structured loss that penalizes structure in the network output that differs from the target image, as described in the pix2pix paper.

The generator loss is a sigmoid cross-entropy loss of the generated images and an array of ones.

The pix2pix paper also uses an L1 loss, which is the mean absolute error (MAE) between the generated image and the target image.

This allows the generated image to become structurally similar to the target image.

The formula to calculate the total generator loss is gan_loss + LAMBDA * l1_loss, where LAMBDA = 100. This value was decided by the authors of the paper.
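The formula above can be sketched as a function. This assumes the discriminator returns raw logits (hence `from_logits=True`); the argument names are illustrative.

```python
import tensorflow as tf

LAMBDA = 100
loss_object = tf.keras.losses.BinaryCrossentropy(from_logits=True)


def generator_loss(disc_generated_output, gen_output, target):
    # Adversarial term: the generator wants the discriminator to output "real" (ones).
    gan_loss = loss_object(tf.ones_like(disc_generated_output), disc_generated_output)
    # L1 term: mean absolute error between the generated and target images.
    l1_loss = tf.reduce_mean(tf.abs(target - gen_output))
    total_gen_loss = gan_loss + LAMBDA * l1_loss
    return total_gen_loss, gan_loss, l1_loss
```

Returning the three values separately makes it possible to log the adversarial and L1 terms on their own.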

The training procedure for the generator is as follows:

Build the discriminator

The discriminator in the pix2pix cGAN is a convolutional PatchGAN classifier: it tries to classify whether each image patch is real or not real, as described in the pix2pix paper.

Each block in the discriminator is: Convolution -> Batch normalization -> Leaky ReLU.

The shape of the output after the last layer is (batch_size, 30, 30, 1).

Each value in the 30 x 30 output classifies a 70 x 70 portion of the input image.

The discriminator receives 2 inputs:

The input image and the target image, which it should classify as real.

The input image and the generated image (the output of the generator), which it should classify as fake.

Use tf.concat([inp, tar], axis=-1) to concatenate these 2 inputs together.
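The 30 x 30 output size and the 70 x 70 patch size quoted above can be checked with a little arithmetic. The layer stack below is an assumption, since the text does not list it: three 4 x 4 stride-2 convolutions with "same" padding, followed by two 4 x 4 stride-1 convolutions that are each preceded by one pixel of zero padding on every side.

```python
# Assumed discriminator layers as (kernel, stride, padding mode).
LAYERS = [
    (4, 2, "same"),
    (4, 2, "same"),
    (4, 2, "same"),
    (4, 1, "pad1_valid"),
    (4, 1, "pad1_valid"),
]


def output_size(size, layers):
    """Spatial size of the output for a square input of side `size`."""
    for kernel, stride, mode in layers:
        if mode == "same":
            size = -(-size // stride)  # ceil(size / stride)
        else:
            # One pixel of zero padding per side, then a "valid" convolution.
            size = (size + 2 - kernel) // stride + 1
    return size


def receptive_field(layers):
    """Side length of the input region seen by a single output value."""
    rf = 1
    for kernel, stride, _ in reversed(layers):
        rf = (rf - 1) * stride + kernel
    return rf


print(output_size(256, LAYERS))  # 30
print(receptive_field(LAYERS))   # 70
```

Under these assumptions a 256 x 256 input shrinks to 128, 64, 32, 31, and finally 30 pixels per side, and each output value depends on a 70 x 70 region of the input.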

Let's define the discriminator:

Visualize the discriminator model architecture:

Test the discriminator:

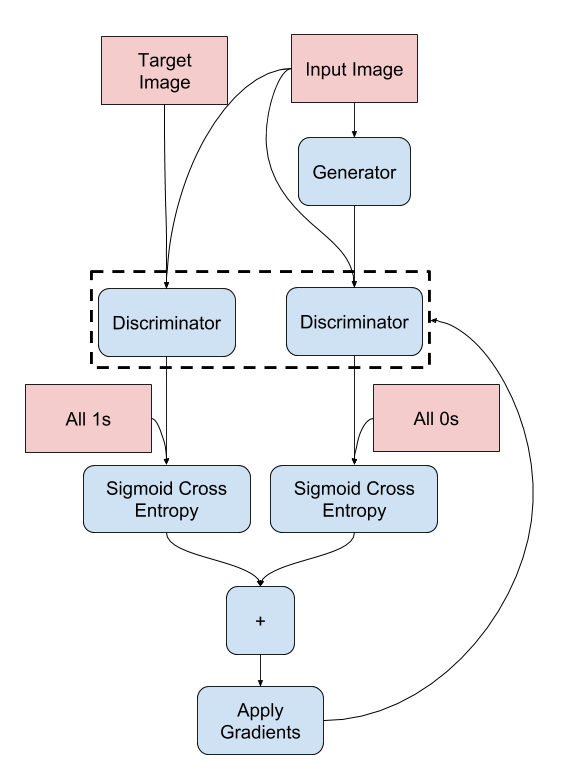

Define the discriminator loss

The discriminator_loss function takes 2 inputs: real images and generated images.

real_loss is a sigmoid cross-entropy loss of the real images and an array of ones (since these are the real images).

generated_loss is a sigmoid cross-entropy loss of the generated images and an array of zeros (since these are the fake images).

The total_loss is the sum of real_loss and generated_loss.
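A minimal sketch of this loss, assuming the discriminator returns raw logits (hence `from_logits=True`):

```python
import tensorflow as tf

loss_object = tf.keras.losses.BinaryCrossentropy(from_logits=True)


def discriminator_loss(disc_real_output, disc_generated_output):
    # Real pairs should be classified as ones.
    real_loss = loss_object(tf.ones_like(disc_real_output), disc_real_output)
    # Generated pairs should be classified as zeros.
    generated_loss = loss_object(
        tf.zeros_like(disc_generated_output), disc_generated_output)
    return real_loss + generated_loss
```

Because the two terms are summed, a discriminator that outputs a logit of 0 everywhere scores about 2 * log(2) on this loss.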

The training procedure for the discriminator is shown below.

To learn more about the architecture and the hyperparameters you can refer to the pix2pix paper.

Define the optimizers and a checkpoint-saver

Generate images

Write a function to plot some images during training.

Pass images from the test set to the generator.

The generator will then translate the input image into the output.

The last step is to plot the predictions and voila!

Note: training=True is intentional here, since you want the batch statistics while running the model on the test dataset. If you use training=False, you get the accumulated statistics learned from the training dataset (which you don't want).
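The difference between the two modes can be seen on a single batch normalization layer. This is a standalone illustration, not part of the generator: a freshly created layer has a moving mean of 0 and a moving variance of 1, so inference mode leaves the input almost unchanged, while training mode normalizes with the statistics of the current batch.

```python
import tensorflow as tf

tf.random.set_seed(0)
bn = tf.keras.layers.BatchNormalization()
x = tf.random.normal([8, 4, 4, 3], mean=5.0, stddev=2.0)

# training=False: normalize with the stored moving statistics.
y_infer = bn(x, training=False)
# training=True: normalize with the mean and variance of this batch.
y_train = bn(x, training=True)

print(float(tf.reduce_mean(y_infer)))  # roughly 5: the input mean survives
print(float(tf.reduce_mean(y_train)))  # roughly 0: the batch mean is removed
```

The inference call comes first here because a call with training=True also updates the layer's moving statistics.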

Test the function:

Training

For each example input, the generator produces an output.

The discriminator receives the input_image and the generated image as the first input. The second input is the input_image and the target_image.

Next, calculate the generator and the discriminator loss.

Then, calculate the gradients of each loss with respect to the generator and discriminator variables, and apply them with the corresponding optimizer.

Finally, log the losses to TensorBoard.
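The steps above can be sketched as a single training step. To keep the example small and fast, it uses tiny stand-in networks rather than the U-Net generator and PatchGAN discriminator, and it omits TensorBoard logging. The Adam settings (learning rate 2e-4, beta_1 0.5) are an assumption, and the helper names are hypothetical.

```python
import tensorflow as tf

LAMBDA = 100
bce = tf.keras.losses.BinaryCrossentropy(from_logits=True)


def make_toy_generator():
    # Stand-in only: one convolution, NOT the tutorial's U-Net.
    inp = tf.keras.layers.Input(shape=[None, None, 3])
    out = tf.keras.layers.Conv2D(3, 3, padding="same", activation="tanh")(inp)
    return tf.keras.Model(inputs=inp, outputs=out)


def make_toy_discriminator():
    # Stand-in only: one convolution over the concatenated pair.
    inp = tf.keras.layers.Input(shape=[None, None, 3])
    tar = tf.keras.layers.Input(shape=[None, None, 3])
    x = tf.keras.layers.Concatenate()([inp, tar])
    out = tf.keras.layers.Conv2D(1, 4, strides=2, padding="same")(x)
    return tf.keras.Model(inputs=[inp, tar], outputs=out)


generator = make_toy_generator()
discriminator = make_toy_discriminator()
# Assumption: Adam with learning rate 2e-4 and beta_1 = 0.5.
generator_optimizer = tf.keras.optimizers.Adam(2e-4, beta_1=0.5)
discriminator_optimizer = tf.keras.optimizers.Adam(2e-4, beta_1=0.5)


def train_step(input_image, target):
    with tf.GradientTape() as gen_tape, tf.GradientTape() as disc_tape:
        gen_output = generator(input_image, training=True)
        disc_real_output = discriminator([input_image, target], training=True)
        disc_generated_output = discriminator(
            [input_image, gen_output], training=True)

        gen_gan_loss = bce(
            tf.ones_like(disc_generated_output), disc_generated_output)
        gen_l1_loss = tf.reduce_mean(tf.abs(target - gen_output))
        gen_total_loss = gen_gan_loss + LAMBDA * gen_l1_loss
        disc_loss = (
            bce(tf.ones_like(disc_real_output), disc_real_output)
            + bce(tf.zeros_like(disc_generated_output), disc_generated_output))

    # Each network is updated only from its own loss.
    gen_grads = gen_tape.gradient(gen_total_loss, generator.trainable_variables)
    disc_grads = disc_tape.gradient(disc_loss, discriminator.trainable_variables)
    generator_optimizer.apply_gradients(
        zip(gen_grads, generator.trainable_variables))
    discriminator_optimizer.apply_gradients(
        zip(disc_grads, discriminator.trainable_variables))
    return gen_total_loss, gen_gan_loss, gen_l1_loss, disc_loss
```

Using two tapes keeps the two gradient computations independent, so the generator and the discriminator each have their own optimizer state.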

The actual training loop. Since this tutorial can run on more than one dataset, and the datasets vary greatly in size, the training loop is set up to work in steps instead of epochs.

Iterates over the number of steps.

Every 10 steps: print a dot (.).

Every 1k steps: clear the display and run generate_images to show the progress.

Every 5k steps: save a checkpoint.
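The step-based schedule above can be sketched without any framework. The callables `train_step`, `show_progress`, and `save_checkpoint` are hypothetical placeholders supplied by the caller, and `itertools.cycle` stands in for repeating a dataset; with `tf.data` you would more likely use `train_ds.repeat().take(steps)`.

```python
import itertools


def fit(train_batches, steps, train_step, show_progress, save_checkpoint):
    """Run `steps` training steps, cycling through `train_batches` as needed."""
    batches = itertools.islice(itertools.cycle(train_batches), steps)
    for step, (input_image, target) in enumerate(batches):
        if step % 1000 == 0:
            show_progress(step)  # e.g. clear the display and plot a sample
        train_step(input_image, target, step)
        if (step + 1) % 10 == 0:
            print(".", end="", flush=True)
        if (step + 1) % 5000 == 0:
            save_checkpoint(step)
```

Counting steps rather than epochs keeps the amount of training comparable between a small dataset and a much larger one.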

This training loop saves logs that you can view in TensorBoard to monitor the training progress.

If you work on a local machine, you would launch a separate TensorBoard process. When working in a notebook, launch the viewer before starting the training to monitor with TensorBoard.

Launch the TensorBoard viewer (Sorry, this doesn't display on tensorflow.org):

You can view the results of a previous run of this notebook on TensorBoard.dev.

Finally, run the training loop:

Interpreting the logs is more subtle when training a GAN (or a cGAN like pix2pix) compared to a simple classification or regression model. Things to look for:

Check that neither the generator nor the discriminator model has "won". If either the gen_gan_loss or the disc_loss gets very low, it's an indicator that this model is dominating the other, and you are not successfully training the combined model.

The value log(2) = 0.69 is a good reference point for these losses, as it indicates a perplexity of 2: the discriminator is, on average, equally uncertain about the two options.

For the disc_loss, a value below 0.69 means the discriminator is doing better than random on the combined set of real and generated images. Because disc_loss is defined above as the sum of real_loss and generated_loss, a completely undecided discriminator scores about 2 * 0.69 = 1.39 on it.

For the gen_gan_loss, a value below 0.69 means the generator is doing better than random at fooling the discriminator.

As training progresses, the gen_l1_loss should go down.
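The log(2) reference point can be reproduced by hand. The helper below is a plain-Python version of sigmoid cross-entropy written for illustration; it is not a TensorFlow function.

```python
import math


def sigmoid_cross_entropy(logit, label):
    """Binary cross-entropy between a label in {0, 1} and sigmoid(logit)."""
    p = 1.0 / (1.0 + math.exp(-logit))
    return -(label * math.log(p) + (1 - label) * math.log(1 - p))


# A logit of 0 means a predicted probability of 0.5: fully undecided.
undecided = sigmoid_cross_entropy(0.0, 1)
print(round(undecided, 4))            # 0.6931, i.e. log(2)
print(round(math.exp(undecided), 4))  # 2.0, the perplexity

# The sum of a real term and a generated term doubles the value.
both = sigmoid_cross_entropy(0.0, 1) + sigmoid_cross_entropy(0.0, 0)
print(round(both, 4))                 # 1.3863
```

A loss far below these values on one side means that network is classifying, or fooling, with high confidence.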
