Copyright 2018 The TensorFlow Authors.

Neural style transfer

This tutorial uses deep learning to compose one image in the style of another image (ever wish you could paint like Picasso or Van Gogh?). This is known as neural style transfer and the technique is outlined in A Neural Algorithm of Artistic Style (Gatys et al.).

Note: This tutorial demonstrates the original style-transfer algorithm, which optimizes the image content to match a particular style. Modern approaches train a model to generate the stylized image directly (similar to CycleGAN), which is much faster (up to 1000x).

For a simple application of style transfer with a pretrained model from TensorFlow Hub, check out the Fast style transfer for arbitrary styles tutorial that uses an arbitrary image stylization model. For an example of style transfer with TensorFlow Lite, refer to Artistic style transfer with TensorFlow Lite.

Neural style transfer is an optimization technique used to take two images—a content image and a style reference image (such as an artwork by a famous painter)—and blend them together so the output image looks like the content image, but “painted” in the style of the style reference image.

This is implemented by optimizing the output image to match the content statistics of the content image and the style statistics of the style reference image. These statistics are extracted from the images using a convolutional network.

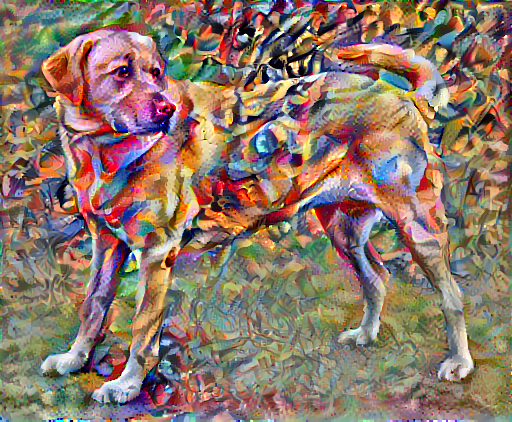

For example, let’s take an image of this dog and Wassily Kandinsky's Composition 7:

Yellow Labrador Looking, from Wikimedia Commons by Elf. License CC BY-SA 3.0

Now, what would it look like if Kandinsky decided to paint the picture of this dog exclusively in this style? Something like this?

Setup

Import and configure modules

Download images and choose a style image and a content image:

Visualize the input

Define a function to load an image and limit its maximum dimension to 512 pixels.
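The size arithmetic behind that limit can be sketched in plain Python. This is only an illustration: `scaled_shape` is a hypothetical helper, file reading and the actual resize call are left out, and it assumes the longer side is scaled to exactly `max_dim` with the aspect ratio preserved (so smaller images would be enlarged).

```python
def scaled_shape(height, width, max_dim=512):
    """Return (new_height, new_width) with the longer side equal to max_dim."""
    # Assumption: one scale factor, taken from the longer side, is applied
    # to both dimensions so the aspect ratio is preserved.
    scale = max_dim / max(height, width)
    return round(height * scale), round(width * scale)


print(scaled_shape(1024, 768))  # (512, 384)
```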

Create a simple function to display an image:

Fast Style Transfer using TF-Hub

This tutorial demonstrates the original style-transfer algorithm, which optimizes the image content to a particular style. Before getting into the details, let's see how the TensorFlow Hub model does this:

Define content and style representations

Use the intermediate layers of the model to get the content and style representations of the image. Starting from the network's input layer, the first few layer activations represent low-level features like edges and textures. As you step through the network, the final few layers represent higher-level features—object parts like wheels or eyes. In this case, you are using the VGG19 network architecture, a pretrained image classification network. These intermediate layers are necessary to define the representation of content and style from the images. For an input image, try to match the corresponding style and content target representations at these intermediate layers.

Load a VGG19 and test run it on our image to ensure it's used correctly:

Now load a VGG19 without the classification head, and list the layer names:

Choose intermediate layers from the network to represent the style and content of the image:

Intermediate layers for style and content

So why do these intermediate outputs within our pretrained image classification network allow us to define style and content representations?

At a high level, in order for a network to perform image classification (which this network has been trained to do), it must understand the image. This requires taking the raw image as input pixels and building an internal representation that converts the raw image pixels into a complex understanding of the features present within the image.

This is also a reason why convolutional neural networks are able to generalize well: they’re able to capture the invariances and defining features within classes (e.g. cats vs. dogs) that are agnostic to background noise and other nuisances. Thus, somewhere between where the raw image is fed into the model and the output classification label, the model serves as a complex feature extractor. By accessing intermediate layers of the model, you're able to describe the content and style of input images.

Build the model

The networks in tf.keras.applications are designed so you can easily extract the intermediate layer values using the Keras functional API.

To define a model using the functional API, specify the inputs and outputs:

model = Model(inputs, outputs)

The following function builds a VGG19 model that returns a list of intermediate layer outputs:
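A minimal sketch of such a function, assuming `tf.keras.applications.VGG19` and the Keras functional API. The function name `vgg_layers` and the `weights` parameter are choices made for this sketch; passing `weights=None` builds the architecture without downloading the pretrained weights.

```python
import tensorflow as tf


def vgg_layers(layer_names, weights="imagenet"):
    """Build a model that maps an image batch to the named VGG19 layer outputs."""
    # include_top=False drops the classification head.
    vgg = tf.keras.applications.VGG19(include_top=False, weights=weights)
    # The network is only used as a fixed feature extractor.
    vgg.trainable = False
    outputs = [vgg.get_layer(name).output for name in layer_names]
    # Functional API: a model is defined by its inputs and outputs.
    return tf.keras.Model(inputs=vgg.input, outputs=outputs)
```

For example, `vgg_layers(["block1_conv1", "block5_conv2"])` would return a model whose call yields one tensor per requested layer, in the order given.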

And to create the model:

Calculate style

The content of an image is represented by the values of the intermediate feature maps.

It turns out, the style of an image can be described by the means and correlations across the different feature maps. Calculate a Gram matrix that includes this information by taking the outer product of the feature vector with itself at each location, and averaging that outer product over all locations. This Gram matrix can be calculated for a particular layer as:
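Written out from the description above, with $F^l_{ijc}(x)$ assumed to denote the activation of channel $c$ at spatial position $(i, j)$ in layer $l$ for image $x$, and $I$ and $J$ the height and width of that feature map:

$$G^l_{cd} = \frac{\sum_{ij} F^l_{ijc}(x)\, F^l_{ijd}(x)}{IJ}$$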

This can be implemented concisely using the tf.linalg.einsum function:
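As a sketch of the computation, here is the same contraction in NumPy. `np.einsum` stands in for `tf.linalg.einsum`, which accepts the same subscript string; the `(batch, height, width, channels)` layout is an assumption about how the feature maps are stored.

```python
import numpy as np


def gram_matrix(features):
    """Average outer product of channel vectors over all spatial locations.

    features: array of shape (batch, height, width, channels).
    Returns an array of shape (batch, channels, channels).
    """
    # Sum F[b, i, j, c] * F[b, i, j, d] over the spatial indices i and j.
    sums = np.einsum("bijc,bijd->bcd", features, features)
    num_locations = features.shape[1] * features.shape[2]
    return sums / num_locations


features = np.ones((1, 2, 2, 3))
print(gram_matrix(features).shape)  # (1, 3, 3)
```

The result has one row and one column per channel, so its size depends on the number of filters in the layer and not on the image resolution.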

Extract style and content

Build a model that returns the style and content tensors.

When called on an image, this model returns the Gram matrix (style) of the style_layers and content of the content_layers:

Run gradient descent

With this style and content extractor, you can now implement the style transfer algorithm. Do this by calculating the mean squared error for your image's output relative to each target, then taking the weighted sum of these losses.

Set your style and content target values:

Define a tf.Variable to contain the image to optimize. To make this quick, initialize it with the content image (the tf.Variable must be the same shape as the content image):

Since this is a float image, define a function to keep the pixel values between 0 and 1:

Create an optimizer. The paper recommends L-BFGS, but Adam works okay, too:

To optimize this, use a weighted combination of the two losses to get the total loss:
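A NumPy sketch of that combination follows. The dictionary layout (`"style"` and `"content"` keys mapping layer names to arrays) and the default weights are assumptions made for illustration, not values confirmed by the text above.

```python
import numpy as np


def style_content_loss(outputs, targets, style_weight=1e-2, content_weight=1e4):
    """Weighted sum of per-layer mean squared errors.

    outputs and targets are dicts with keys "style" and "content", each
    mapping a layer name to an array. The default weights are assumptions.
    """
    def mean_layer_mse(out, tgt):
        # Mean squared error for each layer, then averaged over the layers.
        return np.mean([np.mean((out[name] - tgt[name]) ** 2) for name in out])

    style_loss = style_weight * mean_layer_mse(outputs["style"], targets["style"])
    content_loss = content_weight * mean_layer_mse(outputs["content"], targets["content"])
    return style_loss + content_loss
```

Averaging over the layers keeps the relative weight of style and content independent of how many layers each representation uses.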

Use tf.GradientTape to update the image.
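The sketch below shows the shape of such an update under simplifying assumptions: `loss_fn` is any callable that maps the image to a scalar loss, and plain gradient descent with a hypothetical `learning_rate` stands in for the optimizer. The key point it illustrates is that the gradient is taken with respect to the image pixels, not model weights.

```python
import tensorflow as tf


def clip_0_1(image):
    """Keep float pixel values inside [0, 1]."""
    return tf.clip_by_value(image, clip_value_min=0.0, clip_value_max=1.0)


def train_step(image, loss_fn, learning_rate=0.02):
    """One gradient step on the pixels of `image` (a tf.Variable)."""
    with tf.GradientTape() as tape:
        loss = loss_fn(image)
    # Differentiate the loss with respect to the image itself.
    grad = tape.gradient(loss, image)
    # Plain gradient descent for brevity; an optimizer such as Adam
    # would apply the gradient here instead.
    image.assign(clip_0_1(image - learning_rate * grad))
    return loss
```

In the full algorithm, `loss_fn` would run the extractor on the image and return the weighted style and content loss.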

Now run a few steps to test:

Since it's working, perform a longer optimization:

Total variation loss

One downside to this basic implementation is that it produces a lot of high frequency artifacts. Decrease these using an explicit regularization term on the high frequency components of the image. In style transfer, this is often called the total variation loss:

This shows how the high frequency components have increased.

Also, this high frequency component is basically an edge-detector. You can get similar output from the Sobel edge detector, for example:

The regularization loss associated with this is the sum of the absolute values of these differences:

That demonstrates what it does, but there's no need to implement it yourself. TensorFlow includes a standard implementation:
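The library call in question is `tf.image.total_variation(image)`, which is documented as summing the absolute differences between neighbouring pixel values. A NumPy sketch of that quantity, assuming a `(batch, height, width, channels)` image, looks like this (the helper names are chosen for this sketch):

```python
import numpy as np


def high_pass_x_y(image):
    """Differences between neighbouring pixels along width (x) and height (y)."""
    # image has shape (batch, height, width, channels).
    x_deltas = image[:, :, 1:, :] - image[:, :, :-1, :]
    y_deltas = image[:, 1:, :, :] - image[:, :-1, :, :]
    return x_deltas, y_deltas


def total_variation(image):
    """Sum of absolute neighbour differences, one value per image in the batch."""
    x_deltas, y_deltas = high_pass_x_y(image)
    return np.abs(x_deltas).sum(axis=(1, 2, 3)) + np.abs(y_deltas).sum(axis=(1, 2, 3))
```

A perfectly flat image scores zero, and every sharp pixel-to-pixel jump adds to the total, which is why penalizing this value suppresses high frequency artifacts.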

Re-run the optimization

Choose a weight for the total_variation_loss:

Now include it in the train_step function:

Reinitialize the image-variable and the optimizer:

And run the optimization:

Finally, save the result:

Learn more

This tutorial demonstrates the original style-transfer algorithm. For a simple application of style transfer, check out the Fast style transfer for arbitrary styles tutorial to learn more about how to use the arbitrary image style transfer model from TensorFlow Hub.
