Path: blob/main/docs/literate/src/files/differentiable_programming.jl

5591 views

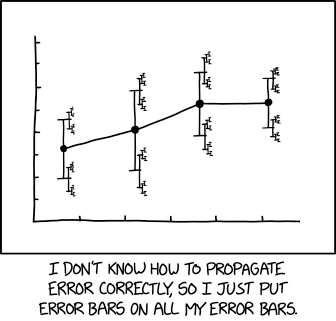

#src # Differentiable programming1using Test: @test #src23# [Julia and its ecosystem provide some tools for differentiable programming](https://sinews.siam.org/Details-Page/scientific-machine-learning-how-julia-employs-differentiable-programming-to-do-it-best).4# Trixi.jl is designed to be flexible, extendable, and composable with Julia's growing ecosystem for5# scientific computing and machine learning. Thus, the ultimate goal is to have fast implementations6# that allow automatic differentiation (AD) without too much hassle for users. If some parts do not7# meet these requirements, please feel free to open an issue or propose a fix in a PR.89# In the following, we will walk through some examples demonstrating how to differentiate through10# Trixi.jl.1112# ## Forward mode automatic differentiation1314# Trixi.jl integrates well with [ForwardDiff.jl](https://github.com/JuliaDiff/ForwardDiff.jl)15# for forward mode AD.1617# ### Computing the Jacobian18# The high-level interface to compute the Jacobian this way is [`jacobian_ad_forward`](@ref).19# First, we load the required packages and compute the Jacobian of a semidiscretization20# of the compressible Euler equations, a system of nonlinear conservation laws.2122using Trixi, LinearAlgebra, Plots2324equations = CompressibleEulerEquations2D(1.4)2526solver = DGSEM(3, flux_central)27mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 2, n_cells_max = 10^5,28periodicity = true)2930semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_density_wave, solver;31boundary_conditions = boundary_condition_periodic)3233J = jacobian_ad_forward(semi);34size(J)35@test size(J) == (1024, 1024) #src3637# Next, we compute the eigenvalues of the Jacobian.3839λ = eigvals(J)40scatter(real.(λ), imag.(λ), label = "central flux")4142# As you can see here, the maximal real part is close to zero.4344relative_maximum = maximum(real, λ) / maximum(abs, λ)45@test 3.0e-10 < relative_maximum < 9.0e-10 #src4647# Interestingly, if we add dissipation by switching to the `flux_lax_friedrichs`48# at the interfaces, the maximal real part of the eigenvalues increases.4950solver = DGSEM(3, flux_lax_friedrichs)51semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_density_wave, solver;52boundary_conditions = boundary_condition_periodic)5354J = jacobian_ad_forward(semi)55λ = eigvals(J)5657scatter!(real.(λ), imag.(λ), label = "Lax-Friedrichs flux")5859# Although the maximal real part is still somewhat small, it's larger than for60# the purely central discretization.6162relative_maximum = maximum(real, λ) / maximum(abs, λ)63@test 1.0e-5 < relative_maximum < 5.0e-5 #src6465# However, we should be careful when using this analysis, since the eigenvectors66# are not necessarily well-conditioned.6768λ, V = eigen(J)69condition_number = cond(V)70@test 1.0e6 < condition_number < 5.0e6 #src7172# In one space dimension, the situation is a bit different.7374equations = CompressibleEulerEquations1D(1.4)7576solver = DGSEM(3, flux_central)77mesh = TreeMesh((-1.0,), (1.0,), initial_refinement_level = 6, n_cells_max = 10^5,78periodicity = true)7980semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_density_wave, solver;81boundary_conditions = boundary_condition_periodic)8283J = jacobian_ad_forward(semi)8485λ = eigvals(J)8687scatter(real.(λ), imag.(λ), label = "central flux")8889# Here, the maximal real part is basically zero to machine accuracy.9091relative_maximum = maximum(real, λ) / maximum(abs, λ)92@test 1.0e-17 < relative_maximum < 2.0e-15 #src9394# Moreover, the eigenvectors are not as ill-conditioned as in 2D.9596λ, V = eigen(J)97condition_number = cond(V)98@test 200 < condition_number < 4000 #src99100# If we add dissipation, the maximal real part is still approximately zero.101102solver = DGSEM(3, flux_lax_friedrichs)103semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_density_wave, solver;104boundary_conditions = boundary_condition_periodic)105106J = jacobian_ad_forward(semi)107λ = eigvals(J)108109scatter!(real.(λ), imag.(λ), label = "Lax-Friedrichs flux")110111# As you can see from the plot generated above, the maximal real part is still112# basically zero to machine precision.113114relative_maximum = maximum(real, λ) / maximum(abs, λ)115@test -1.0e-15 < relative_maximum < 1.0e-15 #src116117# Let's check the condition number of the eigenvectors.118119λ, V = eigen(J)120121condition_number = cond(V)122@test 70_000 < condition_number < 200_000 #src123124# Note that the condition number of the eigenvector matrix increases but is125# still smaller than for the example in 2D.126127# ### Computing other derivatives128129# It is also possible to compute derivatives of other dependencies using AD in Trixi.jl. For example,130# you can compute the gradient of an entropy-dissipative semidiscretization with respect to the131# ideal gas constant of the compressible Euler equations as described in the following. This example132# is also available as the elixir133# [`examples/special_elixirs/elixir_euler_ad.jl`](https://github.com/trixi-framework/Trixi.jl/blob/main/examples/special_elixirs/elixir_euler_ad.jl)134135# First, we create a semidiscretization of the compressible Euler equations.136137using Trixi, LinearAlgebra, ForwardDiff138139equations = CompressibleEulerEquations2D(1.4)140141"""142initial_condition_isentropic_vortex(x, t, equations::CompressibleEulerEquations2D)143144The classical isentropic vortex test case of145- Chi-Wang Shu (1997)146Essentially Non-Oscillatory and Weighted Essentially Non-Oscillatory147Schemes for Hyperbolic Conservation Laws148[NASA/CR-97-206253](https://ntrs.nasa.gov/citations/19980007543)149"""150function initial_condition_isentropic_vortex(x, t, equations::CompressibleEulerEquations2D)151inicenter = SVector(0.0, 0.0) # initial center of the vortex152iniamplitude = 5.0 # size and strength of the vortex153154rho = 1.0 # base flow155v1 = 1.0156v2 = 1.0157vel = SVector(v1, v2)158p = 25.0159160rt = p / rho # ideal gas equation161t_loc = 0.0162163cent = inicenter + vel * t_loc # shift advection of center to handle periodic BC, but only for v1 = v2 = 1.0164cent = x - cent # distance to center point165cent = SVector(-cent[2], cent[1])166167r2 = cent[1]^2 + cent[2]^2168du = iniamplitude / (2 * π) * exp(0.5 * (1 - r2)) # vel. perturbation169dtemp = -(equations.gamma - 1) / (2 * equations.gamma * rt) * du^2 # isentropic170171rho = rho * (1 + dtemp)^(1 / (equations.gamma - 1))172vel = vel + du * cent173v1, v2 = vel174p = p * (1 + dtemp)^(equations.gamma / (equations.gamma - 1))175176prim = SVector(rho, v1, v2, p)177return prim2cons(prim, equations)178end179180mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 2, n_cells_max = 10^5,181periodicity = true)182183solver = DGSEM(3, flux_lax_friedrichs, VolumeIntegralFluxDifferencing(flux_ranocha))184185semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_isentropic_vortex,186solver;187boundary_conditions = boundary_condition_periodic)188189u0_ode = Trixi.compute_coefficients(0.0, semi)190size(u0_ode)191@test size(u0_ode) == (1024,) #src192193# Next, we compute the Jacobian using `ForwardDiff.jacobian`.194195J = ForwardDiff.jacobian((du_ode, γ) -> begin196equations_inner = CompressibleEulerEquations2D(first(γ))197semi_inner = Trixi.remake(semi, equations = equations_inner,198uEltype = eltype(γ))199Trixi.rhs!(du_ode, u0_ode, semi_inner, 0.0)200end, similar(u0_ode), [1.4]); # γ needs to be an `AbstractArray`201202round.(extrema(J), sigdigits = 2)203@test round.(extrema(J), sigdigits = 2) == (-220.0, 220.0) #src204205# Note that we create a semidiscretization `semi` at first to determine the state `u0_ode` around206# which we want to perform the linearization. Next, we wrap the RHS evaluation inside a closure207# and pass that to `ForwardDiff.jacobian`. There, we need to make sure that the internal caches208# are able to store dual numbers from ForwardDiff.jl by setting `uEltype` appropriately. A similar209# approach is used by [`jacobian_ad_forward`](@ref).210211# Note that the ideal gas constant does not influence the semidiscrete rate of change of the212# density, as demonstrated by213214norm(J[1:4:end])215@test norm(J[1:4:end]) == 0.0 #src216217# Here, we used some knowledge about the internal memory layout of Trixi.jl, an array of structs218# with the conserved variables as fastest-varying index in memory.219220# ## Differentiating through a complete simulation221222# It is also possible to differentiate through a complete simulation. As an example, let's differentiate223# the total energy of a simulation using the linear scalar advection equation with respect to the224# wave number (frequency) of the initial data.225226using Trixi, OrdinaryDiffEqLowOrderRK, ForwardDiff, Plots227228function energy_at_final_time(k) # k is the wave number of the initial condition229equations = LinearScalarAdvectionEquation2D(1.0, -0.3)230mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 3,231n_cells_max = 10^4, periodicity = true)232solver = DGSEM(3, flux_lax_friedrichs)233initial_condition = (x, t, equation) -> begin234x_trans = Trixi.x_trans_periodic_2d(x - equation.advection_velocity * t)235return SVector(sinpi(k * sum(x_trans)))236end237semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition, solver;238uEltype = typeof(k),239boundary_conditions = boundary_condition_periodic)240ode = semidiscretize(semi, (0.0, 1.0))241sol = solve(ode, FRK65(), dt = 0.05, adaptive = false, save_everystep = false)242return Trixi.integrate(energy_total, sol.u[end], semi)243end244245k_values = range(0.9, 1.1, length = 101)246247plot(k_values, energy_at_final_time.(k_values), label = "Energy")248249# You see a plot of a curve that resembles a parabola with local maximum around `k = 1.0`.250# Why's that? Well, the domain is fixed but the wave number changes. Thus, if the wave number is251# not chosen as an integer, the initial condition will not be a smooth periodic function in the252# given domain. Hence, the dissipative surface flux (`flux_lax_friedrichs` in this example)253# will introduce more dissipation. In particular, it will introduce more dissipation for "less smooth"254# initial data, corresponding to wave numbers `k` further away from integers.255256# We can compute the discrete derivative of the energy at the final time with respect to the wave257# number `k` as follows.258259round(ForwardDiff.derivative(energy_at_final_time, 1.0), sigdigits = 2)260@test round(ForwardDiff.derivative(energy_at_final_time, 1.0), sigdigits = 2) == 1.4e-5 #src261262# This is rather small and we can treat it as zero in comparison to the value of this derivative at263# other wave numbers `k`.264265dk_values = ForwardDiff.derivative.((energy_at_final_time,), k_values);266267plot(k_values, dk_values, label = "Derivative")268269# If you remember basic calculus, a sufficient condition for a local maximum is that the first derivative270# vanishes and the second derivative is negative. We can also check this discretely.271272second_derivative = round(ForwardDiff.derivative(k -> Trixi.ForwardDiff.derivative(energy_at_final_time,273k), 1.0),274sigdigits = 2)275@test second_derivative ≈ -0.9 #src276277# Having seen this application, let's break down what happens step by step.278279function energy_at_final_time(k) # k is the wave number of the initial condition280equations = LinearScalarAdvectionEquation2D(1.0, -0.3)281mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 3,282n_cells_max = 10^4, periodicity = true)283solver = DGSEM(3, flux_lax_friedrichs)284initial_condition = (x, t, equation) -> begin285x_trans = Trixi.x_trans_periodic_2d(x - equation.advection_velocity * t)286return SVector(sinpi(k * sum(x_trans)))287end288semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition, solver;289uEltype = typeof(k),290boundary_conditions = boundary_condition_periodic)291ode = semidiscretize(semi, (0.0, 1.0))292sol = solve(ode, FRK65(), dt = 0.05, adaptive = false, save_everystep = false)293return Trixi.integrate(energy_total, sol.u[end], semi)294end295296k = 1.0297round(ForwardDiff.derivative(energy_at_final_time, k), sigdigits = 2)298@test round(ForwardDiff.derivative(energy_at_final_time, 1.0), sigdigits = 2) == 1.4e-5 #src299300# When calling `ForwardDiff.derivative(energy_at_final_time, k)` with `k=1.0`, ForwardDiff.jl301# will basically use the chain rule and known derivatives of existing basic functions302# to calculate the derivative of the energy at the final time with respect to the303# wave number `k` at `k0 = 1.0`. To do this, ForwardDiff.jl uses dual numbers, which304# basically store the result and its derivative w.r.t. a specified parameter at the305# same time. Thus, we need to make sure that we can treat these `ForwardDiff.Dual`306# numbers everywhere during the computation. Fortunately, generic Julia code usually307# supports these operations. The most basic problem for a developer is to ensure308# that all types are generic enough, in particular the ones of internal caches.309310# The first step in this example creates some basic ingredients of our simulation.311equations = LinearScalarAdvectionEquation2D(1.0, -0.3)312mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 3, n_cells_max = 10^4,313periodicity = true)314solver = DGSEM(3, flux_lax_friedrichs);315316# These do not have internal caches storing intermediate values of the numerical317# solution, so we do not need to adapt them. In fact, we could also define them318# outside of `energy_at_final_time` (but would need to take care of globals or319# wrap everything in another function).320321# Next, we define the initial condition322initial_condition = (x, t, equation) -> begin323x_trans = Trixi.x_trans_periodic_2d(x - equation.advection_velocity * t)324return SVector(sinpi(k * sum(x_trans)))325end;326# as a closure capturing the wave number `k` passed to `energy_at_final_time`.327# If you call `energy_at_final_time(1.0)`, `k` will be a `Float64`. Thus, the328# return values of `initial_condition` will be `SVector`s of `Float64`s. When329# calculating the `ForwardDiff.derivative`, `k` will be a `ForwardDiff.Dual` number.330# Hence, the `initial_condition` will return `SVector`s of `ForwardDiff.Dual`331# numbers.332333# The semidiscretization `semi` uses some internal caches to avoid repeated allocations334# and speed up the computations, e.g. for numerical fluxes at interfaces. Thus, we335# need to tell Trixi.jl to allow `ForwardDiff.Dual` numbers in these caches. That's what336# the keyword argument `uEltype=typeof(k)` in337semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition, solver;338uEltype = typeof(k),339boundary_conditions = boundary_condition_periodic)340341# does. This is basically the only part where you need to modify your standard Trixi.jl342# code to enable automatic differentiation. From there on, the remaining steps343ode = semidiscretize(semi, (0.0, 1.0))344sol = solve(ode, FRK65(), dt = 0.05, adaptive = false, save_everystep = false)345round(Trixi.integrate(energy_total, sol.u[end], semi), sigdigits = 5)346@test round(Trixi.integrate(energy_total, sol.u[end], semi), sigdigits = 5) == 0.25 #src347348# do not need any modifications since they are sufficiently generic (and enough effort349# has been spend to allow general types inside these calls).350351# ## Propagating errors using Measurements.jl352353# [](https://xkcd.com/2110/)354# "Error bars" by Randall Munroe, linked from https://xkcd.com/2110355356# Similar to AD, Trixi.jl also allows propagating uncertainties using linear error propagation357# theory via [Measurements.jl](https://github.com/JuliaPhysics/Measurements.jl).358# As an example, let's create a system representing the linear advection equation359# in 1D with an uncertain velocity. Then, we create a semidiscretization using a360# sine wave as initial condition, solve the ODE, and plot the resulting uncertainties361# in the primitive variables.362363using Trixi, OrdinaryDiffEqLowOrderRK, Measurements, Plots, LaTeXStrings364365equations = LinearScalarAdvectionEquation1D(1.0 ± 0.1)366367mesh = TreeMesh((-1.0,), (1.0,), n_cells_max = 10^5, initial_refinement_level = 5,368periodicity = true)369370solver = DGSEM(3)371372semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_convergence_test,373solver, uEltype = Measurement{Float64},374boundary_conditions = boundary_condition_periodic)375376ode = semidiscretize(semi, (0.0, 1.5))377378sol = solve(ode, BS3(); ode_default_options()...);379380plot(sol)381382# You should see a plot where small error bars are shown around the extrema383# and larger error bars are shown in the remaining parts.384# This result is in accordance with expectations. Indeed, the uncertain propagation385# speed will affect the extrema less since the local variation of the solution is386# relatively small there. In contrast, the local variation of the solution is large387# around the turning points of the sine wave, so the uncertainties will be relatively388# large there.389390# All this is possible due to allowing generic types and having good abstractions391# in Julia that allow packages to work together seamlessly.392393# ## Finite difference approximations394395# Trixi.jl provides the convenience function [`jacobian_fd`](@ref) to approximate the Jacobian396# via central finite differences.397398using Trixi, LinearAlgebra399400equations = CompressibleEulerEquations2D(1.4)401402solver = DGSEM(3, flux_central)403404mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 2, n_cells_max = 10^5,405periodicity = true)406407semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_density_wave, solver;408boundary_conditions = boundary_condition_periodic)409410J_fd = jacobian_fd(semi)411412J_ad = jacobian_ad_forward(semi)413414relative_difference = norm(J_fd - J_ad) / size(J_fd, 1)415@test relative_difference < 7.0e-7 #src416417# This discrepancy is of the expected order of magnitude for central finite difference approximations.418419# ## Automatic Jacobian sparsity detection and coloring420421# When solving large sparse nonlinear ODE systems originating from spatial discretizations422# with compact stencils such as the DG method with implicit time integrators,423# exploiting the sparsity of the Jacobian can lead to significant speedups in the Newton-Raphson solver.424# Similarly, steady-state problems can also be solved faster.425426# [Trixi.jl](https://github.com/trixi-framework/Trixi.jl) supports efficient Jacobian computations by leveraging the427# [SparseConnectivityTracer.jl](https://github.com/adrhill/SparseConnectivityTracer.jl)428# and [SparseMatrixColorings.jl](https://github.com/gdalle/SparseMatrixColorings.jl) packages.429# These tools allow to detect the sparsity pattern of the Jacobian and compute the430# optional coloring vector for efficient Jacobian evaluations.431# These are then handed over to the ODE solver from [OrdinaryDiffEq.jl](https://github.com/SciML/OrdinaryDiffEq.jl).432433# Below is a minimal example in 1D, showing how to use these packages with Trixi.jl.434# First, we define the the `equation` and `mesh` as for an ordinary simulation:435436using Trixi437438advection_velocity = 1.0439equation = LinearScalarAdvectionEquation1D(advection_velocity)440441mesh = TreeMesh((-1.0,), (1.0,), initial_refinement_level = 4, n_cells_max = 10^4,442periodicity = true)443444# We define the basic floating point type used for the actual simulation445# and construct the solver:446float_type = Float64447solver = DGSEM(polydeg = 3, surface_flux = flux_godunov, RealT = float_type)448449# Next, we set up the sparsity detection. For this we need450using SparseConnectivityTracer # For Jacobian sparsity pattern451452# We use the [global `TracerSparsityDetector()`](https://adrianhill.de/SparseConnectivityTracer.jl/stable/user/global_vs_local/) here.453jac_detector = TracerSparsityDetector()454455# Next, we retrieve the right element type corresponding to `float_type` for the Jacobian sparsity detection.456# For more details, see the API documentation of457# [`jacobian_eltype`](https://adrianhill.de/SparseConnectivityTracer.jl/stable/user/api/#SparseConnectivityTracer.jacobian_eltype).458jac_eltype = jacobian_eltype(float_type, jac_detector)459460# Now we can construct the semidiscretization for sparsity detection with `jac_eltype` as the461# datatype for the working arrays and helper datastructures.462semi_jac_type = SemidiscretizationHyperbolic(mesh, equation,463initial_condition_convergence_test, solver;464boundary_conditions = boundary_condition_periodic,465uEltype = jac_eltype) # Supply sparsity detection datatype here466467tspan = (0.0, 1.0) # Re-used later in `rhs!` evaluation468ode_jac_type = semidiscretize(semi_jac_type, tspan)469u0_ode = ode_jac_type.u0470du_ode = similar(u0_ode)471472# Wrap the RHS for sparsity detection to match the expected signature `f!(du, u)` required by473# [`jacobian_sparsity`](https://adrianhill.de/SparseConnectivityTracer.jl/stable/user/api/#ADTypes.jacobian_sparsity).474rhs_wrapped! = (du, u) -> Trixi.rhs!(du, u, semi_jac_type, tspan[1])475jac_prototype = jacobian_sparsity(rhs_wrapped!, du_ode, u0_ode, jac_detector)476477# Optionally, we can also compute the coloring vector to reduce Jacobian evaluations478# to `1 + maximum(coloring_vec)` for finite differencing and `maximum(coloring_vec)` for algorithmic differentiation.479# For this, we need480using SparseMatrixColorings481482# We partition by columns as we are using finite differencing here.483# One would also partition by columns if forward-based algorithmic differentiation were used,484# and only partition by rows if reverse-mode AD were used.485# See also [the documentation of the now deprecated SparseDiffTools.jl](https://github.com/JuliaDiff/SparseDiffTools.jl?tab=readme-ov-file#matrix-coloring) package,486# the predecessor in spirit to SparseConnectivityTracer.jl and SparseMatrixColorings.jl, for more information.487coloring_prob = ColoringProblem(; structure = :nonsymmetric, partition = :column)488coloring_alg = GreedyColoringAlgorithm(; decompression = :direct)489coloring_result = coloring(jac_prototype, coloring_prob, coloring_alg)490coloring_vec = column_colors(coloring_result)491492# Now, set up the actual semidiscretization for the simulation.493# The datatype is automatically retrieved from the solver (in this case `float_type = Float64`).494semi_float_type = SemidiscretizationHyperbolic(mesh, equation,495initial_condition_convergence_test, solver;496boundary_conditions = boundary_condition_periodic)497# Supply the sparse Jacobian prototype and the optional coloring vector.498# Internally, an [`ODEFunction`](https://docs.sciml.ai/DiffEqDocs/stable/types/ode_types/#SciMLBase.ODEFunction)499# with `jac_prototype = jac_prototype` and `colorvec = coloring_vec` is created.500ode_jac_sparse = semidiscretize(semi_float_type, tspan,501jac_prototype = jac_prototype,502colorvec = coloring_vec)503504# You can now solve the ODE problem efficiently with an implicit solver.505# Currently we are bound to finite differencing here.506using OrdinaryDiffEqSDIRK, ADTypes507sol = solve(ode_jac_sparse, TRBDF2(; autodiff = AutoFiniteDiff()), dt = 0.1,508save_everystep = false);509510# ## Linear systems511512# When a linear PDE is discretized using a linear scheme such as a standard DG method,513# the resulting semidiscretization yields an affine ODE of the form514515# ```math516# \partial_t u(t) = A u(t) - b,517# ```518519# where `A` is a linear operator ("matrix") and `b` is a vector. Trixi.jl allows you520# to obtain this linear structure in a matrix-free way by using [`linear_structure`](@ref).521# The resulting operator `A` can be used in multiplication, e.g. `mul!` from522# LinearAlgebra, converted to a sparse matrix using `sparse` from SparseArrays,523# or converted to a dense matrix using `Matrix` for detailed eigenvalue analyses.524# For example,525526using Trixi, LinearAlgebra, Plots527528equations = LinearScalarAdvectionEquation2D(1.0, -0.3)529530solver = DGSEM(3, flux_lax_friedrichs)531532mesh = TreeMesh((-1.0, -1.0), (1.0, 1.0), initial_refinement_level = 2, n_cells_max = 10^5,533periodicity = true)534535semi = SemidiscretizationHyperbolic(mesh, equations, initial_condition_convergence_test,536solver;537boundary_conditions = boundary_condition_periodic)538539A, b = linear_structure(semi)540541size(A), size(b)542@test (size(A), size(b)) == ((256, 256), (256,)) #src543544# Next, we compute the eigenvalues of the linear operator.545546λ = eigvals(Matrix(A))547548scatter(real.(λ), imag.(λ))549550# As you can see here, the maximal real part is close to machine precision.551552λ = eigvals(Matrix(A))553relative_maximum = maximum(real, λ) / maximum(abs, λ)554@test relative_maximum < 1.0e-15 #src555556# Since the linear structure defines the action of the linear matrix-alike operator `A`557# on a vector, Krylov-subspace based iterative solvers can be employed to efficiently solve558# the resulting linear system.559# For instance, one may use the [Krylov.jl](https://github.com/JuliaSmoothOptimizers/Krylov.jl) package to solve560# e.g. steady-stage problems, i.e., problems where ``\partial_t u(t) = 0``.561# Note that the present problem does not possess an actual steady state.562563# Anyways, to solve the linear system ``A u = b``, one can use for instance the GMRES method:564using Krylov565566u_steady_state, solve_stats = gmres(A, b)567568# ## Package versions569570# These results were obtained using the following versions.571572using InteractiveUtils573versioninfo()574575using Pkg576Pkg.status(["Trixi", "OrdinaryDiffEqLowOrderRK", "OrdinaryDiffEqSDIRK",577"Plots", "LaTeXStrings",578"ForwardDiff", "SparseConnectivityTracer", "SparseMatrixColorings",579"ADTypes",580"Krylov", "LinearAlgebra",581"Measurements"],582mode = PKGMODE_MANIFEST)583584585